We need to correct a misconception.

Docker is not the pair of khakis you remember from your dad’s closet; those are Dockers.

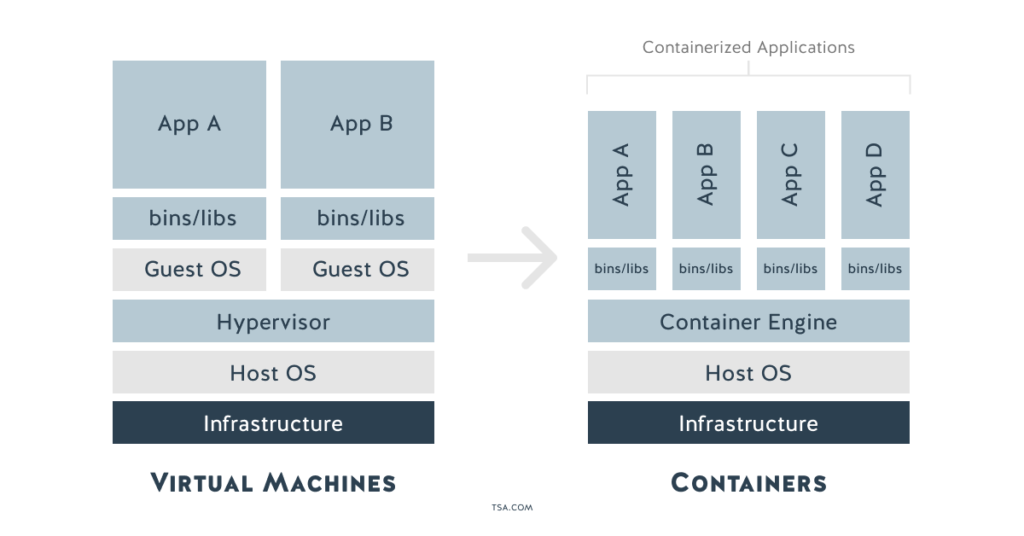

If you grew up on VMs, as I did, you probably think of containers as VMs: tiny, highly portable servers. With this thinking, you’re probably tempted to install your entire OS and application into a container as if you were deploying a VM, just on a new platform (in this case, Docker).

You could do this, but you probably shouldn’t.

That lift-and-shift approach worked when we were first transitioning base images from physical servers to VMware, but with containers it costs you many of their benefits.

Unlike VMs, containers don’t have a hypervisor that emulates hardware. Instead, the container build process creates an immutable image (a stack of software layers) that runs alongside, but isolated from, other processes on the same kernel (i.e., containers share the host’s operating system kernel).

Containers are designed to be small, and a huge portion of their value comes from keeping them nimble. A good rule of thumb is that each container should do one thing well. Docker bakes this in at a technical level: by default, a container starts a single main process, and the container stops when that process exits.

Containers are one of the ways that people implement a micro-services methodology for applications.

By separating your application into micro-services, you’ll be able to:

- scale each service of an application according to its needs

- version and update each service independently

- host each service or portion of an application wherever it makes the most sense (e.g., you may wish to use a small database for local development, but a managed service in production)

Because you use a Dockerfile to specify how to build and run the source code for your application, containers also make it easier to support multi-cloud strategies: the same portable container can run on different hosts and providers.
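To make the idea of a Dockerfile concrete, here is a minimal sketch for a hypothetical Node.js service. The base image, the `src/index.js` entry point, and the use of yarn are assumptions for illustration, not requirements of this tutorial. The snippet only writes the file into a scratch directory so you can inspect it; it does not build anything.

```shell
# Write a sample Dockerfile into a scratch directory (nothing is built yet).
workdir="$(mktemp -d)"

cat > "$workdir/Dockerfile" <<'EOF'
# Assumed base image; choose one that matches your app's runtime.
FROM node:18-alpine
WORKDIR /app
# Copy the application source into the image.
COPY . .
RUN yarn install --production
# The single main process the container starts with.
CMD ["node", "src/index.js"]
EOF

# Show what was written.
cat "$workdir/Dockerfile"
```

From a directory that holds the application source and a file like this, `docker build -t my-app .` would build the image and `docker run my-app` would start it (`my-app` is a made-up name).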

With that background out of the way, let’s install Docker!

How to Get Started with Docker

Before installing Docker, you should understand that the docker that you can install via the command line with yum install docker is not the same thing as Docker-CE. Docker-CE is the community (free) version that is compatible with the release cycles and management style of Docker-EE. So, for production environments, most CentOS users install Docker-CE. One of the biggest advantages of Docker-CE over the docker available from the yum repository is the ability to configure various storage drivers that affect performance and reliability.

Step 1: Follow this installation guide to install Docker CE.
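For orientation, the CentOS path through that kind of guide looks roughly like the sketch below. The repository URL and package names are the ones Docker publishes for CentOS, but treat the official guide as the source of truth, since package names and steps change between releases. The `run` helper and the `install_docker_ce` function are hypothetical conveniences: by default they only print each command, and nothing is installed until you set `DRY_RUN=0`.

```shell
# Print each command by default; set DRY_RUN=0 to actually run them.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then echo "+ $*"; else "$@"; fi
}

install_docker_ce() {
  # yum-utils provides yum-config-manager.
  run sudo yum install -y yum-utils
  # Add Docker's own repository so yum can find the docker-ce packages.
  run sudo yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo
  # Install Docker CE rather than the distribution's "docker" package.
  run sudo yum install -y docker-ce docker-ce-cli containerd.io
  run sudo systemctl start docker
}

install_docker_ce
```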

For security reasons, you’ll want to manage Docker as a non-root user instead of running every command as root. You’ll also want to configure Docker to start at boot.

Step 2: Follow this post-installation guide to increase the security of your installation.

NOTE: You don’t need to configure the remote access portion of the post-install guide yet.
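The two post-install goals above (non-root access and start at boot) usually come down to a handful of commands. The following is a sketch, assuming a systemd-based host; `run` and `docker_post_install` are hypothetical helper names, and the commands are only printed unless you set `DRY_RUN=0`. Keep in mind that membership in the docker group effectively grants root-level access to the host, so add users deliberately.

```shell
# Print each command by default; set DRY_RUN=0 to actually run them.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then echo "+ $*"; else "$@"; fi
}

docker_post_install() {
  # Default to the current user; pass a name to target someone else.
  local user="${1:-$(id -un)}"
  # -f makes groupadd succeed even if the group already exists.
  run sudo groupadd -f docker
  # Let the user talk to the Docker daemon without sudo.
  run sudo usermod -aG docker "$user"
  # Start Docker (and containerd) automatically at boot.
  run sudo systemctl enable docker.service
  run sudo systemctl enable containerd.service
}

docker_post_install
```

After a real run, the user needs to log out and back in for the new group membership to take effect.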

Step 3: Follow this guide to configure your storage driver.

For Docker-CE on CentOS, Docker recommends using overlay2. overlay2 requires a backing filesystem of either xfs formatted with a special option (ftype=1) or ext4.

You do NOT want to use your default VM disk / for your docker volumes.

Instead, create a second VM disk (/dev/sdb), format it with either xfs (with ftype=1) or ext4, and mount it at /var/lib/docker.
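As a rough sketch of that disk setup, assuming the second disk really is /dev/sdb, that it holds nothing you need, and that you chose xfs: the `run` and `prepare_docker_disk` helpers are hypothetical, and they only print the commands unless you set `DRY_RUN=0`. Double-check the device name first, because formatting is destructive.

```shell
# Print each command by default; set DRY_RUN=0 to actually run them.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then echo "+ $*"; else "$@"; fi
}

prepare_docker_disk() {
  local disk="${1:-/dev/sdb}"
  # Stop Docker so nothing writes to /var/lib/docker while it is swapped out.
  run sudo systemctl stop docker
  # WARNING: mkfs erases everything on the target disk.
  run sudo mkfs.xfs -n ftype=1 "$disk"
  run sudo mkdir -p /var/lib/docker
  run sudo mount "$disk" /var/lib/docker
  run sudo systemctl start docker
}

prepare_docker_disk /dev/sdb
```

To make the mount survive a reboot you would also add a matching entry to /etc/fstab. Anything already stored under /var/lib/docker is hidden once the new disk is mounted over it.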

You’re ready to go! You can test whether the installation works (if you haven’t done so already) by running docker run hello-world. Then use docker image ls to see the image you just pulled and ran.
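Those checks can be bundled into a small script. This is a sketch: `verify_docker` is a hypothetical helper name, and the `DOCKER` variable exists only so the command can be swapped out. The last command asks the daemon which storage driver it is using, which should report overlay2 if the storage step took effect.

```shell
# The CLI to call; override DOCKER to substitute another command.
DOCKER="${DOCKER:-docker}"

verify_docker() {
  # Pull and run the test image.
  "$DOCKER" run hello-world || return 1
  # List the image that was just pulled.
  "$DOCKER" image ls hello-world || return 1
  # Print the storage driver currently in use.
  "$DOCKER" info --format '{{.Driver}}'
}

if command -v "$DOCKER" >/dev/null 2>&1; then
  verify_docker || echo "Docker check failed; is the daemon running?"
else
  echo "$DOCKER not found on PATH; install Docker CE first"
fi
```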

How to Create a Docker Container

We originally planned to provide a detailed walkthrough for creating and deploying an application in a container for this tutorial, but, frankly, Docker has done an amazing job with their Getting Started guide. It walks you through everything you need to get started using a simple React-based to-do application.

In the Getting Started Guide you’ll learn to:

- Deploy an app

- Update it

- Share it for distribution (using a public repository; Docker Hub also offers private repositories)

- Persist the database using volumes and bind mounts

- Run multi-container applications and create a network between containers

- Define and automate multi-container applications using docker-compose

- Pick up a few tips and tricks about deploying a container

By the end of the guide, you’ll have created and deployed your application locally. However, in the real world, a single container instance isn’t robust enough to support your application in production. For that, you’ll need orchestration (like Kubernetes, originally developed at Google), often paired with provisioning and automation tools (like HashiCorp’s Terraform or Red Hat’s Ansible), to tell your environment how many instances to run and where to deploy them.